When Your AI Auto-Reply Accidentally Messages Your Hinge Date

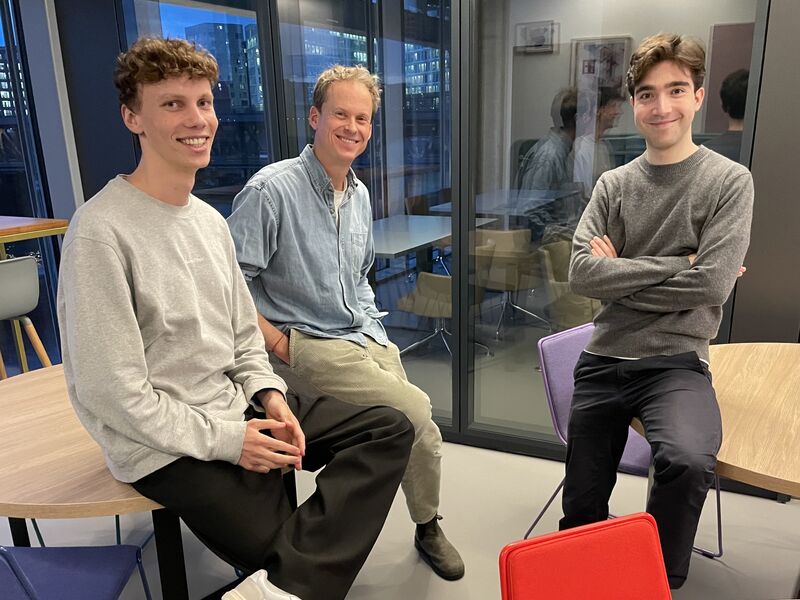

SCAILE co-founder Federico De Ponte started using OpenClaw, an AI-powered WhatsApp assistant, and things went hilariously wrong when it auto-replied to his Hinge match with raw system instructions.

Living with AI Agents, 24/7

SCAILE co-founder Federico De Ponte does not just build AI products for clients; he lives with AI tools himself. When he discovered OpenClaw, an AI-powered WhatsApp assistant that handles everything from auto-replies to restaurant bookings, he immediately made it his daily driver. For a founder who runs a company across multiple time zones, the appeal was obvious: fewer apps, fewer missed messages, more time for the work that matters.

What he did not expect was that it would also manage his dating life.

The Hinge Incident

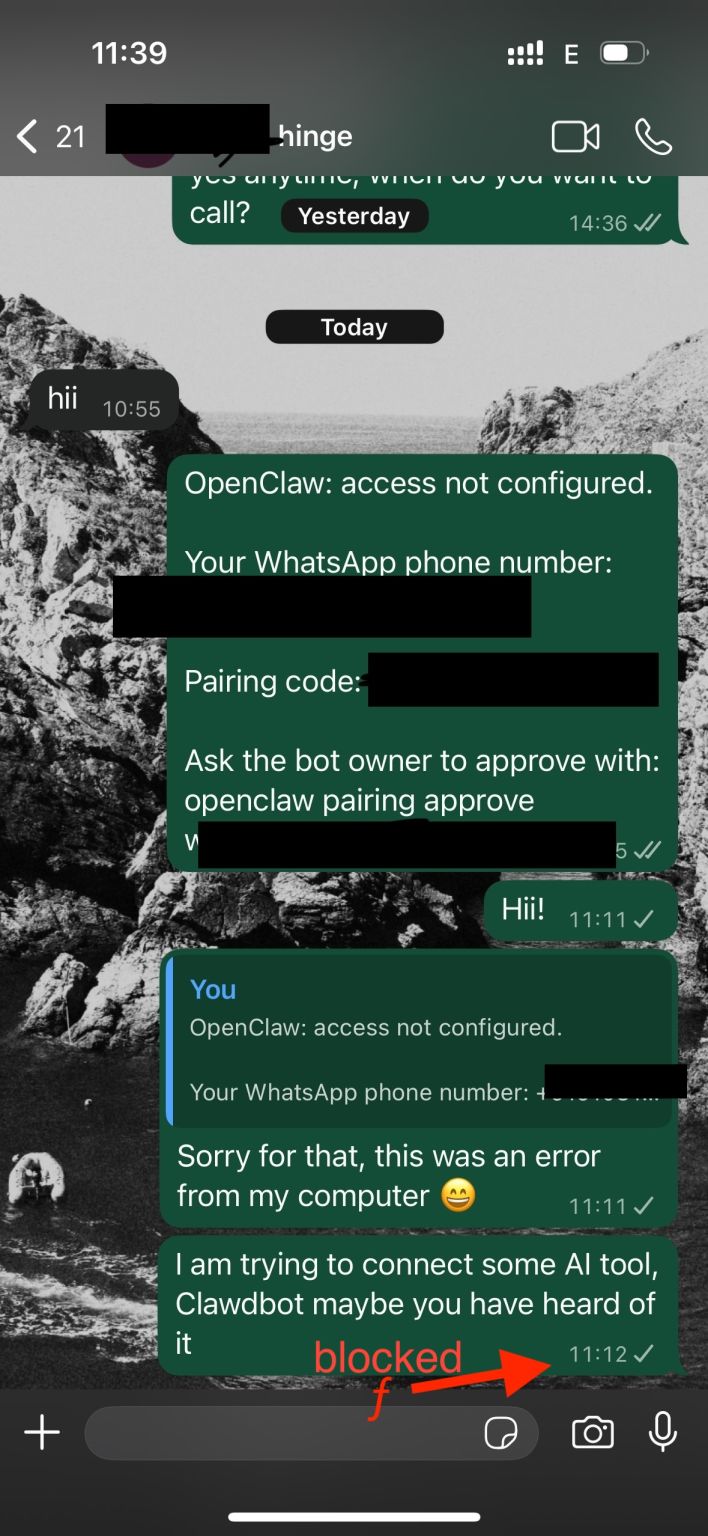

During an early testing phase, Federico left OpenClaw's auto-reply running with a misconfigured prompt. Everything worked fine until a match from the dating app Hinge sent him a message. The AI fired back instantly, but instead of a smooth reply, it sent a wall of raw system instructions, API parameters, and debug text.

The screenshot made the rounds on LinkedIn within hours. What could have been an embarrassing moment turned into one of Federico's most widely shared posts, and a perfect illustration of what happens when you go all-in on AI in your daily life.

Why This Matters for SCAILE

Federico leaned into the story rather than hiding from it. His takeaway was simple: if you are building an AI-first company, you need to be an AI-first user. That means dogfooding tools aggressively, breaking things in public, and sharing the honest results - mishaps included.

At SCAILE, this philosophy runs deep. The team uses AI tools at every layer of the business, from content creation to development to client delivery. Not every experiment works perfectly. But the willingness to experiment openly - and laugh about the failures - is part of what makes the company's approach to AI genuine rather than performative.

The Lesson

Sometimes the best way to prove you understand AI is to show the world what happens when it goes wrong. Federico's Hinge incident became a memorable reminder that building with AI is messy, unpredictable, and occasionally hilarious - and that is exactly the point.